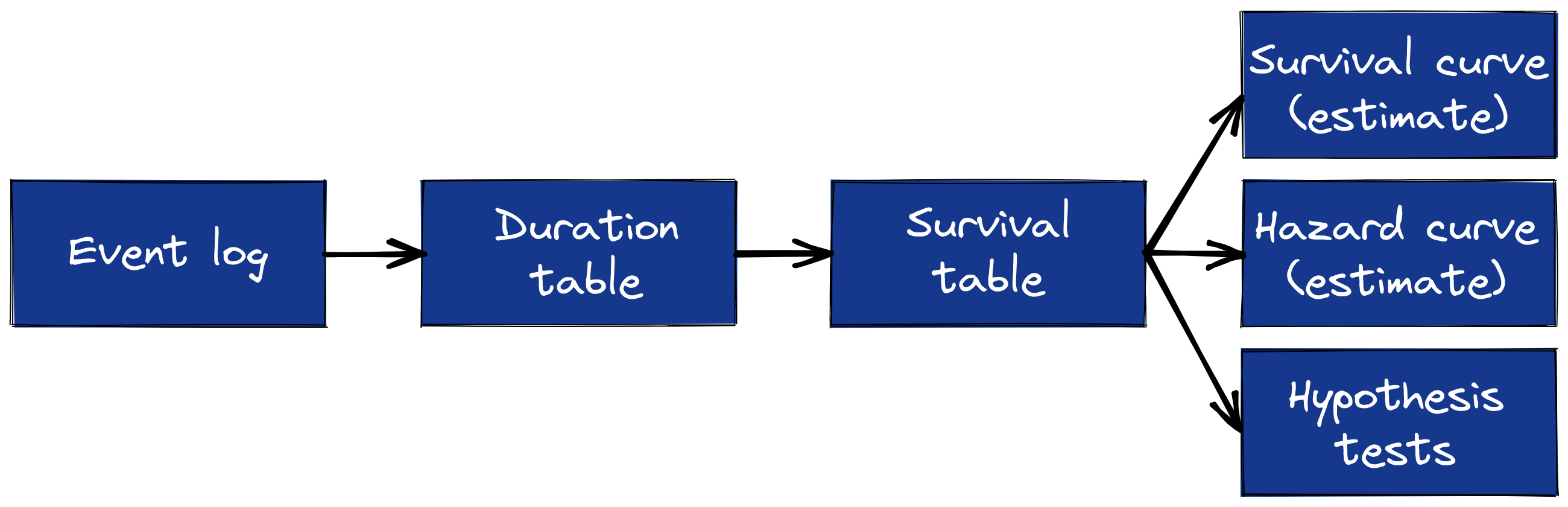

The survival table is the workhorse of univariate survival analysis. Previously, I showed to build a duration table from an event log; now I show how to take the next steps, from duration table to survival table and estimates of survival and hazard curves.

Python

survival analysis

Author

Brian Kent

Published

July 15, 2021

The survival table is the workhorse of univariate survival analysis; it’s the last step before estimating survival and cumulative hazard curves and running hypothesis tests. I showed previously how to go from an event log to a duration table, but I skipped the survival table step by using the Python packages lifelines and scikit-survival to get survival and hazard curve estimates. In this post, I’ll go back and show to do the middle step of building the survival table from a duration table.

Once we have the survival table, it’s pretty easy to compute survival and cumulative hazard curve estimates, so I’ll show that too.

The sequence of data and analysis artifacts in univariate survival analysis. Image by author.

A duration table refresher

To illustrate building a duration table from an event log, I used the Retail Rocket dataset from Kaggle. This dataset is an event log where each of the 2.8 million rows in this event log represents a user’s interaction with a product on the Retail Rocket website. We’re interested in the duration of time until a user’s first completed transaction.

import pandas as pddurations = pd.read_parquet("retailrocket_durations.parquet")durations.head()

entry_time

endpoint_time

final_obs_time

endpoint_observed

duration_days

visitorid

0

2015-09-11 20:49:49.439

NaT

2015-09-18 02:59:47.788

False

6.256925

1

2015-08-13 17:46:06.444

NaT

2015-09-18 02:59:47.788

False

35.384506

2

2015-08-07 17:51:44.567

NaT

2015-09-18 02:59:47.788

False

41.380593

3

2015-08-01 07:10:35.296

NaT

2015-09-18 02:59:47.788

False

47.825839

4

2015-09-15 21:24:27.167

NaT

2015-09-18 02:59:47.788

False

2.232878

Each row represents a Retail Rocket user; the important columns are endpoint_observed, which indicates whether the user made a transaction during the observation window, and duration_days, which is the time from the customer’s first visit to their first transaction, if applicable, or the censoring time, which is the most recent timestamp in the raw event log. The basic intuition being that for users who still haven’t completed a transaction, we know the time-to-transaction is at least as long as the duration we have observed so far.

What’s a survival table?

A survival table shows many study subjects still “at-risk” of experiencing the outcome event at each duration and how many did experience the event at each duration.1 It also shows how many subjects were censored, although the definition is a little trickier. The censored subjects listed in each row are those whose durations were greater than or equal to that row and less than the next row’s duration.

Here’s the survival table for the duration table above; this is where we want to end up.

Let’s look at the fourth row, for more concrete intuition. The at_risk column shows that 1,387,066 users had not yet made a transaction two days after they entered the system.2.] The num_obs column shows that of those, 9,605 had a duration between 2 and 3 days; 172 users experienced the outcome event, i.e. made a transaction on the 3rd day, and 9,433 were censored.

Let’s look more closely at the censored column. Notice the row with duration_days of 125. That row shows that 10,196 users had a recorded duration of 125 days, but the number of censored users is 45,118—how can that be possible?

Remember what I said above about the censored count including subjects whose censoring time is at least as long as the current row’s duration and less than the next row’s duration. So those 45,118 censored users have a duration of at least 125 days and less than 129 days.

So this is where we want to end up; how do we get there?

From durations to survival

The first step is to count the number of subjects who experienced the event at each duration and the total number of subjects at each duration. This step also establishes the row index for the final survival table; to make the row index nice and clean, I first round the duration days up to the next whole number.3

The number of subjects at risk in each row is the complement of the cumulative sum of subjects with shorter recorded durations. That’s a mouthful and the code is equally non-intuitive, but it’s easier to see in the output.

The total number of users in the system is 1,407,580, so this is the number at-risk at time zero. Of those users, 118 completed a transaction right at time 0 (i.e. their first product interaction was a transaction), so the number of users at risk of transacting on the first day—excluding time 0 itself—is the difference: 1,407,462.

14,536 users had a recorded duration of 1 day, either because they made their first transaction or they were censored in that time (because they entered the system on the last day of the observation window). So the number of users at risk of making a transaction after one full day is

\[

1,407,580 - (118 + 14,536) = 1,392,926

\]

which is reflected in the third row.

The survival table only has rows for durations with at least one observed event, so we can remove the rows with zero events; these are durations where users were lost to observation only.

Code

survival = survival.loc[survival["events"] >0]

The number of censored subjects at each duration is the number of subjects at risk minus the number at risk in the next duration minus the number who experienced the event at the current duration.

This seems unnecessarily complicated at first; why not just subtract events from num_obs to get the number of censored subjects?

Remember that the censored column should include subjects whose censored duration is greater than or equal to the current duration and less than the next duration. Because we’ve removed rows with no observed events, just subtracting the two columns would incorrectly ignore subjects censored at durations when nobody made a transaction.

Estimating survival and hazard functions

A common goal for univariate survival analysis is to plot a Kaplan-Meier estimate of the survival curve. The Kaplan-Meier estimator is

where \(d_i\) is the number of observed events at time \(t_i\) and \(n_i\) is the number of subjects at risk at \(t_i\). Computing this is easy once we have the survival table, using the Pandas Series cumprod method. The plotting code is included but folded by default because it’s a bit verbose.

Kaplan-Meier estimate of the survival curve for Retail Rocket time-to-first-transaction.

Because the fraction of users in this demo who make a transaction is so small (but realistic!), it can be helpful to flip the survival probability upside down, and call it a conversion rate (officially, the cumulative incidence function)

As I showed in the previous article about building duration tables, packages like lifelines and scikit-survival have functions to compute the Kaplan-Meier and Nelson-Aalen outputs, but it’s helpful to know how to compute the intermediate survival table, for a couple of reasons. For one, the survival table is also the basis for hypothesis tests about group differences in survival or hazard rates, and it’s important to have a strong intuition about how those tests work when applying them. Second, sometimes we want to compute the survival table without using 3rd party packages, i.e. when working in SQL.

Footnotes

Survival analysis concepts have some of the worst names in the business. Names like survival, hazard, and at-risk imply the outcome of interest is bad and suggest that all subjects will eventually experience the outcome, neither of which is true in general. For the Retail Rocket example in this post, conversions are good and most users never complete a transaction. I think we’re stuck with the bad names, though, so try to remember that “at-risk” means at risk for completing a first transaction, and “survival” means a user has not yet made any transactions.↩︎

I rounded the duration days up to the next whole number, so the survival table would have a countable row index. So, for example, a duration_days entry of 3 means the user’s true duration was in the interval (2, 3↩︎

The code in this article should work with Python 3.8, numpy 1.21.0, and pandas 1.3.0.↩︎

Source Code

---title: "How to construct survival tables from duration tables"author: "Brian Kent"date: "2021-07-15"image: survival_process.pngcategories: [Python, survival analysis]description: | The survival table is the workhorse of univariate survival analysis. Previously, I showed to build a duration table from an event log; now I show how to take the next steps, from duration table to survival table and estimates of survival and hazard curves.format: html: include-in-header: text: <link rel="canonical" href="https://www.crosstab.io/articles/durations-to-survivals/">---**The _survival table_ is the workhorse of univariate survival analysis; it's the laststep before estimating survival and cumulative hazard curves and running hypothesistests.** I [showed previously][events-durations-python] how to go from an event log to a**duration table**, but I skipped the survival table step by using the Python packages[lifelines][lifelines] and [scikit-survival][sksurv] to get survival and hazard curveestimates. In this post, I'll go back and show to do the middle step of building thesurvival table from a duration table.Once we have the survival table, it's pretty easy to compute survival and cumulativehazard curve estimates, so I'll show that too.## A duration table refresherTo illustrate building a duration table from an event log, I used the [Retail Rocketdataset][retailrocket-data] from Kaggle. This dataset is an event log where each of the2.8 million rows in this event log represents a user's interaction with a product on theRetail Rocket website. We're interested in the duration of time until a user's firstcompleted transaction.The duration table I [computed previously][events-durations-python] for this datasetlooks like this:```{python}import pandas as pddurations = pd.read_parquet("retailrocket_durations.parquet")durations.head()```Each row represents a Retail Rocket user; the important columns are `endpoint_observed`, which indicates whether the user made atransaction during the observation window, and `duration_days`, which is the time from the customer's first visitto their first transaction, if applicable, or the **censoring time**, which is the mostrecent timestamp in the raw event log. The basic intuition being that for users whostill haven't completed a transaction, we know the time-to-transaction is *at least* aslong as the duration we have observed so far.## What's a survival table?A survival table shows many study subjects still “at-risk” of experiencingthe outcome event at each duration and how many did experience the event at eachduration.^[Survival analysis concepts have some of the worst names in the business. Names like *survival*, *hazard*, and *at-risk* imply the outcome of interest is *bad* and suggest that all subjects will eventually experience the outcome, neither of which is true in general. For the Retail Rocket example in this post, conversions are good and most users never complete a transaction. I think we're stuck with the bad names, though, so try to remember that “at-risk” means at risk for completing a first transaction, and “survival” means a user has not yet made any transactions.] It also shows how manysubjects were censored, although the definition is a little trickier. The censoredsubjects listed in each row are those whose durations were greater than or equal to thatrow and less than the next row's duration.Here's the survival table for the duration table above; this is where we want to end up.``` at_risk num_obs events censoredduration_days 0 1407580 118 118 01 1407462 14536 9451 50852 1392926 5860 379 54813 1387066 9605 172 94334 1377461 11092 133 10959... ... ... ... ...124 156580 11086 1 11085125 145494 10196 1 45118129 100375 11691 2 11689130 88684 9011 1 9010131 79673 6990 1 79672[124 rows x 4 columns]```Let's look at the fourth row, for more concrete intuition. The `at_risk` column shows that 1,387,066 users had not yet made atransaction two days after they entered the system.^[I rounded the duration days up to the next whole number, so the survival table would have a countable row index. So, for example, a `duration_days` entry of 3 means the user's true duration was in the interval (2, 3].] The `num_obs` column shows thatof those, 9,605 had a duration between 2 and 3 days; 172 users experienced theoutcome event, i.e. made a transaction on the 3rd day, and 9,433 were censored.Let's look more closely at the `censored` column. Notice the row with `duration_days` of 125. That row shows that 10,196 users had a recorded duration of 125 days, but the number of censored users is 45,118---how can that be possible?Remember what I said above about the censored count including subjects whose censoringtime is at least as long as the current row's duration and less than the next row'sduration. So those 45,118 censored users have a duration of at least 125 days and lessthan 129 days.So this is where we want to end up; how do we get there?## From durations to survivalThe first step is to count the number of subjects who experienced the event at eachduration and the total number of subjects at each duration. This step also establishesthe row index for the final survival table; to make the row index nice and clean, Ifirst round the duration days up to the next whole number.^[The code in this article should work with Python 3.8, numpy 1.21.0, and pandas 1.3.0.]```{python}import numpy as npdurations['duration_days'] = np.ceil(durations['duration_days']).astype(int)grp = durations.groupby('duration_days')survival = pd.DataFrame({"num_obs": grp.size(), "events": grp["endpoint_observed"].sum()})```The number of subjects at risk in each row is the *complement* of the cumulative sum ofsubjects with shorter recorded durations. That's a mouthful and the code is equallynon-intuitive, but it's easier to see in the output.```{python}num_subjects =len(durations)prior_count = survival["num_obs"].cumsum().shift(1, fill_value=0)survival.insert(0, "at_risk", num_subjects - prior_count)print(survival.head())```The total number of users in the system is 1,407,580, so this is the number at-risk attime zero. Of those users, 118 completed a transaction right at time 0 (i.e. theirfirst product interaction was a transaction), so the number of users at risk oftransacting on the first day---excluding time 0 itself---is the difference: 1,407,462.14,536 users had a recorded duration of 1 day, either because they made their firsttransaction or they were censored in that time (because they entered the system on thelast day of the observation window). So the number of users at risk of making atransaction after one full day is$$1,407,580 - (118 + 14,536) = 1,392,926$$which is reflected in the third row.The survival table only has rows for durations with at least one observed event, so wecan remove the rows with zero events; these are durations where users were lost toobservation only.```{python}survival = survival.loc[survival["events"] >0]```The number of censored subjects at each duration is the number of subjects at risk minusthe number at risk in the next duration minus the number who experienced the event atthe current duration.```{python}survival["censored"] = ( survival["at_risk"]- survival["at_risk"].shift(-1, fill_value=0)- survival["events"])print(survival)```This seems unnecessarily complicated at first; why not just subtract `events` from `num_obs` to get the number of censored subjects?Remember that the censored column should include subjects whose censored duration is*greater than* or equal to the current duration and less than the next duration. Becausewe've removed rows with no observed events, just subtracting the two columns wouldincorrectly ignore subjects censored at durations when nobody made a transaction.## Estimating survival and hazard functionsA common goal for univariate survival analysis is to plot a [Kaplan-Meier][kaplan-meier]estimate of the survival curve. The Kaplan-Meier estimator is$$\widehat{S}(t) = \prod_{i: t_i \leq t} 1 - \frac{d_i}{n_i}$$where $d_i$ is the number of observed events at time $t_i$ and $n_i$ is the numberof subjects at risk at $t_i$. Computing this is easy once we have the survival table,using the Pandas Series `cumprod` method. The plotting code is included but folded by default because it's a bit verbose.```{python}inverse_hazard =1- survival["events"] / survival["at_risk"]survival["survival_proba"] = inverse_hazard.cumprod()survival.reset_index(inplace=True)``````{python}#| code-fold: true#| fig-cap: "Kaplan-Meier estimate of the survival curve for Retail Rocket time-to-first-transaction."import plotly.graph_objects as gofig = go.Figure( go.Scatter( x=survival['duration_days'], y=survival['survival_proba'], line=dict(width=4, shape="hv") ))fig.update_layout( xaxis_title="Duration (days)", yaxis_title="Survival probability", margin=dict(l=0, t=10, r=0), font_size=16, xaxis_title_font_size=20, yaxis_title_font_size=20)fig.show()```Because the fraction of users in this demo who make a transaction is so small (butrealistic!), it can be helpful to flip the survival probability upside down, and call ita conversion rate (officially, the **cumulative incidence function**)```{python}survival["conversion_pct"] =100* (1- survival["survival_proba"])``````{python}#| code-fold: true#| fig-cap: "Kaplan-Meier estimate of conversion rate over time, also known as cumulative incidence. The y-axis is percentage points, so 0.9 means 0.9%, or 0.009."fig = go.Figure( go.Scatter( x=survival['duration_days'], y=survival['conversion_pct'], line=dict(width=4, shape="hv") ))fig.update_layout( xaxis_title="Duration (days)", yaxis_title="Conversion rate (%)", margin=dict(l=0, t=10, r=0), font_size=16, xaxis_title_font_size=20, yaxis_title_font_size=20)fig.show()```The cumulative hazard function is another important survival analysis output. The[Nelson-Aalen][nelson-aalen] estimator is:$$\widehat{H}(t) = \sum_{i: t_i \leq t} \frac{d_i}{n_i}$$This one is even easier to compute from the survival table; it's a one-liner:```{python}survival['cumulative_hazard'] = (survival['events'] / survival['at_risk']).cumsum()``````{python}#| code-fold: true#| fig-cap: "Nelson-Aalen estimate of cumulative hazard of a Retail Rocket user completing a transaction over the course of their account life."fig = go.Figure( go.Scatter( x=survival['duration_days'], y=survival['cumulative_hazard'], line=dict(width=4, shape="hv") ))fig.update_layout( xaxis_title="Duration (days)", yaxis_title="Cumulative hazard", margin=dict(l=0, t=10, r=0), font_size=16, xaxis_title_font_size=20, yaxis_title_font_size=20)fig.show()```## Final thoughtsOur final survival table plus univariate model outputs looks like this:``` at_risk num_obs events censored survival_proba conversion_pct cumulative_hazardduration_days 0 1407580 118 118 0 0.999916 0.008383 0.0000841 1407462 14536 9451 5085 0.993202 0.679819 0.0067992 1392926 5860 379 5481 0.992932 0.706843 0.0070713 1387066 9605 172 9433 0.992808 0.719156 0.0071954 1377461 11092 133 10959 0.992713 0.728742 0.007291... ... ... ... ... ... ... ...124 156580 11086 1 11085 0.991275 0.872488 0.008740125 145494 10196 1 45118 0.991268 0.873169 0.008747129 100375 11691 2 11689 0.991249 0.875145 0.008767130 88684 9011 1 9010 0.991237 0.876262 0.008779131 79673 6990 1 79672 0.991225 0.877506 0.008791[124 rows x 7 columns]```As I showed in the [previous article][events-durations-python] about building durationtables, packages like [lifelines][lifelines] and [scikit-survival][sksurv] havefunctions to compute the Kaplan-Meier and Nelson-Aalen outputs, but it's helpful to knowhow to compute the intermediate survival table, for a couple of reasons. For one, thesurvival table is also the basis for hypothesis tests about group differences insurvival or hazard rates, and it's important to have a strong intuition about how thosetests work when applying them. Second, sometimes we want to compute the survival tablewithout using 3rd party packages, i.e. when working in SQL.[events-durations-python]: ../events-to-durations[lifelines-util]: https://lifelines.readthedocs.io/en/latest/lifelines.utils.html#lifelines.utils.survival_table_from_events[lifelines]: https://lifelines.readthedocs.io/en/latest/index.html[sksurv]: https://scikit-survival.readthedocs.io/en/stable/[kaplan-meier]: https://en.wikipedia.org/wiki/Kaplan%E2%80%93Meier_estimator[nelson-aalen]: https://en.wikipedia.org/wiki/Nelson%E2%80%93Aalen_estimator[retailrocket-data]: https://www.kaggle.com/retailrocket/ecommerce-dataset